Regulation of AI in Prior Authorization and Claims Review: A Look at Federal and State Consumer Protections

Introduction

Rapid technological developments in artificial intelligence (AI) have drawn growing public attention to their potential benefits and challenges in health care. The Trump administration recently released A National Policy Framework for Artificial Intelligence (“AI Framework”), a set of legislative recommendations that could jump-start congressional activity on the application of AI across a variety of policy areas, not just health care. A core part of the AI Framework emphasizes establishing federal AI policy that preempts many state AI laws to reduce barriers to deploying AI applications. Preemption could nullify state consumer protections governing the use of AI in health coverage processes such as prior authorization, claims review, and appeals. This Issue Brief discusses the types of consumer protections for use of AI in prior authorization and claims review, describes the Trump administration’s AI Framework, and highlights areas to watch as Congress considers AI legislation.

Use of AI in Prior Authorization and Claims Review

The use of AI technology has been embraced by all participants in the claims review cycle: patients, providers, and insurers. The box below describes current uses of AI technology for each party involved in prior authorization and claims review. Prior authorization and claims review are related but distinct steps in the coverage review and reimbursement process (claims review cycle) where AI might be used. Prior authorization is a managed care tool that evaluates whether an item or service is covered by a health plan prior to a patient’s receipt of the care. Claims review is often associated with a determination by an insurer after care is provided about whether and how much to pay for the item or service. Both involve similar decision-making and consumer appeal rights.

The claims review cycle includes health plan decisions made before a patient receives care (prior authorization review), after the care is received (often called retrospective or post-claim review), and while a patient is receiving the care (called concurrent review). Where the medical necessity of a service is involved, the term “utilization review” is often used to describe this process (definitions differ across state and federal requirements).

Parties Involved in the Prior Authorization and Claims Review Process and Their Use of AI

Insurers

Health insurers and other third-party administrators (TPAs), such as pharmacy benefit managers (PBMs), use some form of automation to process the millions of health care claims they review each year. Automation broadly includes the use of algorithms. One definition describes algorithms as a “procedure or set of rules that is applied to a dataset to achieve a certain function or purpose.” Such algorithms, or decision trees, have been used to generate approvals for treatment and have existed in health care administration for some time.

AI has gained attention in recent years for its use to improve the speed and efficiency of existing automated processes, learn from historical claims outcomes (i.e., claims information an insurer has from its enrollees), and predict coverage determinations based on past patterns. Technology companies are vying for insurers and TPAs to adopt their AI-related products with the promise of faster, more accurate claims review. According to a recent National Association of Insurance Commissioners (NAIC) survey of 93 insurance companies in 16 states, 84% of responding insurers across health care insurance product lines use AI or machine learning for a broad range of tasks such as utilization management practices, disease management programs, and prior authorization processes.

Health Care Providers

Providers (hospitals and clinicians) use AI to enhance their ability to prepare and submit health insurance claims for reimbursement from insurers and TPAs. AI tools are being added to health system “revenue cycle management” (RCM), the processes used to manage health system financial operations and improve functions such as coding, insurance eligibility checks, and billing. For example, generative AI allows clinicians to create patient encounter summaries (using ambient scribes) that are automatically included in the patient’s electronic health record and moved across interoperable systems, and to generate content that accelerates the prior authorization and claims review process. The use of AI to create electronic records of patient visits can also allow providers to maximize payments for services by assigning billing codes that command higher rates.

Patients

These same AI systems can assist patients (and their doctors) in appealing a prior authorization or claim denial by, for example, using a patient’s medical information, health plan documents, and clinical guidelines to generate appeal letters and other documentation needed in the appeal process. Various entities are promoting these tools directly to patients; some services charge a fee, and others do not. In addition, recent efforts to enhance data interoperability have encouraged the industry to develop apps that patients can use to consent to the sharing of their health information for multiple purposes, including to help with prior authorization review.

Connection to Interoperability. Developments in technologies to enhance interoperable systems (electronic data sharing among plans, providers, and patients) may make data more readily available for the application of AI technology in prior authorization and claim decision-making. Federal regulations will soon require some health plans to implement application programming interfaces (APIs) to collect and share data among patients, plans, and providers in an effort to streamline and expedite prior authorization review. While this may be helpful to patients and providers, increased data sharing could also result in data being captured inappropriately and used for purposes that might not be allowed under current interoperability agreements, for example, for commercial sale and/or to train new AI tools.

Risks to consumers include the potential for inaccurate or biased outcomes and privacy breaches. AI systems can help insurers and TPAs triage and make coverage decisions, often without any human involvement in the process. Yet the nature of much of this decision-making requires an individualized, sometimes clinical, review of a patient’s unique circumstances. The use of an AI-based algorithm to aid in these decisions may limit full review of a claim when no human judgment is applied. Many insurers made a voluntary pledge in 2025 to have medical professionals review prior authorization denials that involve clinical issues. Still, the use of AI by an insurer or TPA in claims review, even for purely administrative, nonclinical tasks, might lead to incorrect predictions and decisions if the AI model’s data input is incorrect or missing key information. In the past few years, patients have brought class action lawsuits challenging the use of specific algorithms in claims denials, arguing that their denials were improper due to a failure to perform an individual assessment and a lack of transparency about the algorithms and underlying data used to train the AI tool. These cases are still moving through the courts.

Data used in AI tools, either obtained through a patient’s electronic medical record or uploaded by the patient, could create privacy and security risks that may not be protected under the Health Insurance Portability and Accountability Act of 1996 (HIPAA). HIPAA applies only to health plans, health care providers, and health care clearinghouses, not to the technology companies and other third-party entities that access health information. Patient information obtained through an interoperable electronic health records (EHR) system has been the topic of recent litigation, with an EHR technology company claiming that another company obtained patient data under false pretenses and sold it.

Furthermore, the reliability of an AI tool can be compromised when trained on biased data. For example, one study found that algorithms using health care costs as a proxy for health care needs greatly underestimated the needs of Black patients compared to White patients. Health care costs are often lower for Black patients because they have less access to care, not because they have less clinical need. In this case, treatment decisions based on such algorithms may exacerbate health disparities.
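
The mechanism behind this kind of proxy bias can be illustrated with a small sketch. All of the numbers below are invented for illustration and are not taken from the study; the `Patient` fields, the `access_factor` value, and the thresholds are assumptions. The sketch compares which patients a rule would flag for extra care when it ranks them by observed spending versus by a direct (simplified) measure of clinical need.

```python
from dataclasses import dataclass


@dataclass
class Patient:
    group: str               # hypothetical population label
    chronic_conditions: int  # simplified stand-in for clinical need
    access_factor: float     # assumed share of needed care actually received


# Assumed average annual cost of fully treating one condition (invented figure).
COST_PER_CONDITION = 1000.0


def observed_cost(patient: Patient) -> float:
    """Spending visible in claims data: need scaled down by limited access."""
    return patient.chronic_conditions * COST_PER_CONDITION * patient.access_factor


def flag_by_cost(patients: list[Patient], threshold: float) -> list[Patient]:
    """Flag patients whose observed spending meets the threshold (the proxy)."""
    return [p for p in patients if observed_cost(p) >= threshold]


def flag_by_need(patients: list[Patient], min_conditions: int) -> list[Patient]:
    """Flag patients directly on the simplified measure of clinical need."""
    return [p for p in patients if p.chronic_conditions >= min_conditions]


# Group "B" is assumed to receive only 60% of the care it needs.
patients = [
    Patient("A", 4, 1.0),
    Patient("B", 4, 0.6),
    Patient("A", 2, 1.0),
    Patient("B", 5, 0.6),
]

by_cost = flag_by_cost(patients, threshold=3500.0)
by_need = flag_by_need(patients, min_conditions=4)

print("Flagged by cost proxy:", [p.group for p in by_cost])
print("Flagged by clinical need:", [p.group for p in by_need])
```

In this toy setup, the cost-based rule misses both group "B" patients even though their need equals or exceeds that of the flagged group "A" patient, because reduced access lowers their observed spending.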

The Trump Administration’s AI Framework

Promoting AI development. While the Trump administration’s AI Framework contains few details and no recommendations specific to health care or insurance claims review, it broadly recommends expanding the use of AI and imposing only limited federal restrictions through existing agency structures and “industry-led standards.” For example, the AI Framework recommends legislation that would prevent the U.S. from “coercing technology providers, including AI providers, to ban, compel or alter content based on partisan or ideological agendas.” It also recommends that Congress authorize resources to make federal datasets accessible to “industry and academia” for training AI systems.

Preempting some state law protections while keeping others. A prominent part of the AI Framework is its proposal that Congress develop national standards that preempt “cumbersome” state AI laws. Legislation along these lines would aim to stop or prevent these state laws from being implemented. The Administration suggests that these state laws result in a patchwork of different requirements that could restrict U.S. competitiveness. The AI Framework also says that states should not be allowed to penalize AI developers for a third party’s “unlawful conduct” involving their models.

At the same time, the Administration recommends that any legislation “respect the principles of federalism” and not preempt traditional state police powers that allow states to enforce general state laws against AI developers and users, including laws to “protect children, prevent fraud, and protect consumers.”

This framework is consistent with earlier Trump administration actions, including a December 2025 Executive Order restricting state AI regulation and establishing a Department of Justice litigation task force to challenge state AI laws that conflict with federal policy. A July 2025 AI Action Plan (stemming from another Executive Order) included a recommendation that federal agencies not allow AI-related federal funding to flow to states with “burdensome AI regulations.” The AI Action Plan was released shortly after congressional Republicans’ unsuccessful attempt to include a 10-year ban on state regulation of AI in the 2025 budget reconciliation law.

Significantly changing the previous administration’s policies. The Trump administration’s actions mark a significant shift in priorities and approach to AI policy from those of the Biden administration, which sought to establish federal safeguards for the use of AI in health care. The Trump administration rescinded a Biden-era Executive Order that set out an agenda for the development and use of AI “to protect American consumers from fraud, discrimination, and threats to privacy” and “promote safe and responsible” use in health care.

Federal and State Efforts to Regulate AI Use in Prior Authorization and Claims Review

Federal Regulation and Oversight of AI

Few federal standards apply specifically to the use of AI in the prior authorization and claims review process, but all coverage decision-making for both public and private coverage includes general standards intended to ensure reviews are fair, substantive, and timely. These standards are fragmented across federal agencies with separate oversight responsibilities for different health coverage markets.

For private employer-sponsored plans, the federal government, through the U.S. Department of Labor (DOL), oversees claims and appeals process requirements in the Employee Retirement Income Security Act (ERISA). ERISA generally exempts self-insured plans established by private employers from most state insurance laws, including claims review protections, and would likely preempt state AI laws that relate to the claims review process. Most workers with employer-sponsored insurance are in a self-funded plan, meaning that many consumers are not guaranteed state protections related to the use of AI in claims review, where they exist.

These ERISA claims and appeals rules were the basis for reforms applied across all private health coverage in the Affordable Care Act. These reforms established a federal floor of protections for the internal claims and appeals process for those with Marketplace and off-Marketplace private insurance and added an option for all consumers with private coverage to appeal denied claims through an “external review” by an entity independent of the plan.

ERISA requires all employer plan sponsors to ensure the “full and fair” review of all health claims. What “full and fair” means in the context of the use of AI tools in the claims process is yet to be interpreted through guidance or updated regulation. ERISA also contains “fiduciary” rules requiring employers and other fiduciaries to act in the best interest of plan enrollees and monitor vendors’ activities. While these standards might provide some protection to employees related to an employer plan’s use of AI, in practice, fiduciary standards have rarely been applied to employer health plans, and to date, enrollees have not been successful in advancing litigation to challenge employers for breaching their fiduciary duties related to the health plans they sponsor.

Still, one recent DOL case against a large TPA alleged a fiduciary violation and a failure to follow ERISA claims rules when the TPA automatically denied claims in bulk without making an individual medical necessity evaluation for each under the terms of the plan. While these allegations did not necessarily involve AI, the TPA allegedly used an automated process without human review to issue denials. This case was settled with the establishment of a fund to compensate enrollees for improperly denied claims.

Federal guidance specific to AI use in prior authorization and claims review in Medicare and Medicaid has been limited. Both programs have their own claims and appeal consumer protections under federal requirements (and some state standards also apply to Medicaid).

Medicare. 2023 Medicare Advantage regulations and additional 2024 guidance clarify that Medicare Advantage organizations cannot make medical necessity decisions using an algorithm or software that does not consider individual circumstances. Denials based on medical necessity must be reviewed by a health care professional. Regulations proposed in 2024 that addressed bias and discrimination in the use of AI by Medicare Advantage plans were not finalized by the Trump administration. Additionally, the federal government is testing the use of AI to make certain prior authorization decisions for specific services in traditional Medicare through its Wasteful and Inappropriate Services Reduction (WISeR) Model, contracting with AI technology companies to administer this pilot program in six states.

Medicaid. Current Medicaid regulations do not directly address the use of automation in prior authorization. Medicaid managed care regulations require that any managed care organization (MCO) decision to deny services be made by “an individual” with appropriate expertise, but do not explicitly address AI use. Through state managed care contracts (which are reviewed and approved by CMS), states can set requirements for plan performance and reporting, such as requiring plans to disclose the use of AI in prior authorization processes. The Medicaid and CHIP Payment and Access Commission (MACPAC) has recently issued draft recommendations on the use of automation in Medicaid prior authorization.

State AI Consumer Protections in Prior Authorization and Claims Review

In recent years, some states have advanced laws and regulations aimed at protecting consumers from possible harm stemming from algorithmic decision-making systems, such as privacy breaches, inaccuracies, and bias. AI-related legislation continues to be debated in almost every state legislature, with some efforts garnering bipartisan support. Some states have issued regulations and other guidance under existing laws instead of or in addition to new state laws.

State laws specify new and existing AI consumer protections. Some state laws contain wide-ranging protections meant to cut across different sectors of the economy and apply to a broad range of entities, such as developers and those who deploy or use the technology for business purposes. Other state laws are specific to industry sectors (e.g., health care), topics (e.g., employment, civil rights, education), or uses, such as utilization review in health insurance.

Broad state laws include those that prohibit unfair or deceptive acts and practices. All 50 states have broad consumer protection laws that prohibit unfair or deceptive acts or practices. These laws are enforced by state attorneys general, and sometimes also allow a consumer to sue directly for a violation of the law (a “private right of action”) instead of relying on the state alone to enforce it. Colorado and Utah are examples of states that have amended their consumer protection laws to provide for general AI consumer protections.

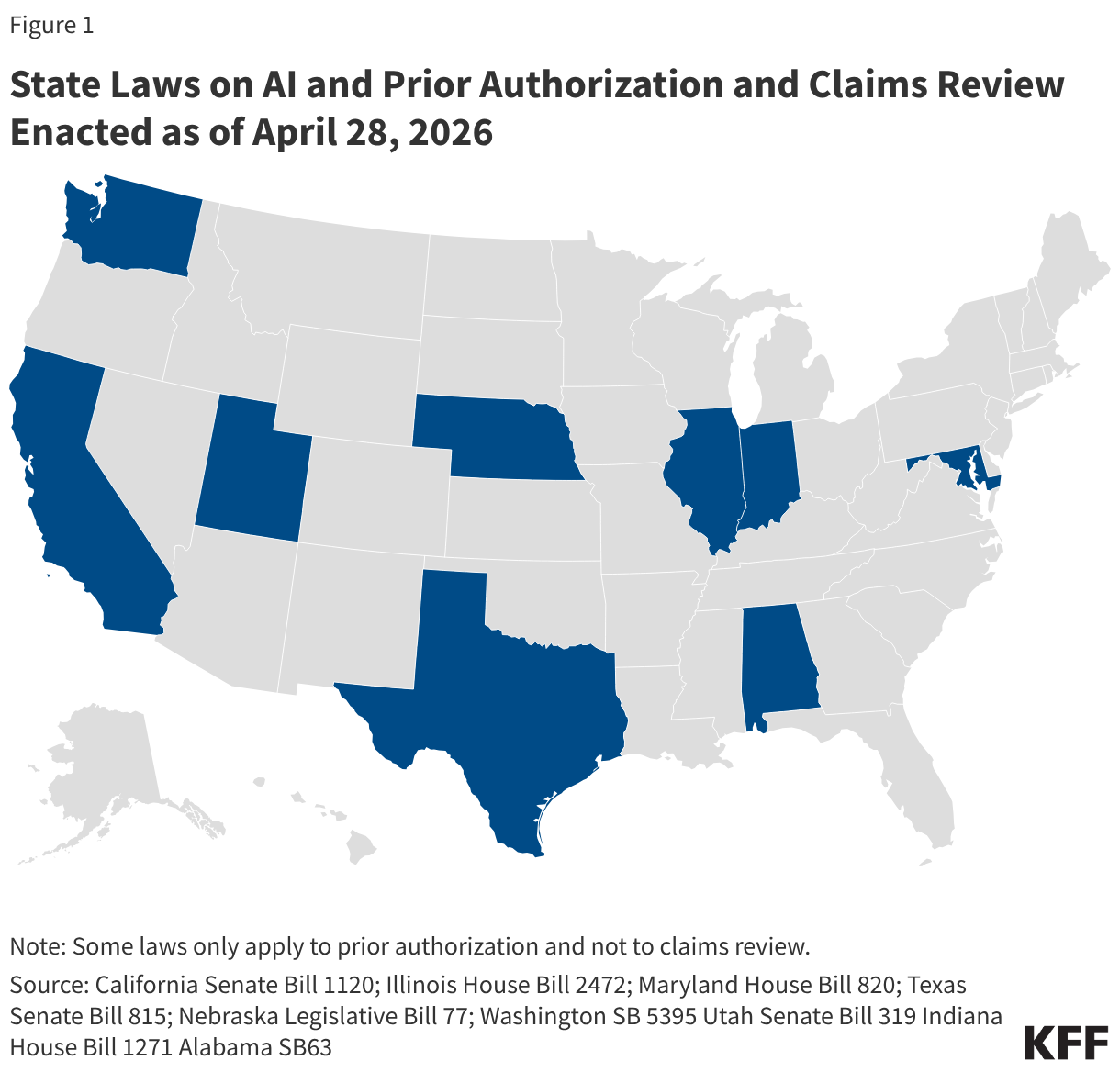

Depending on the specific state law, these broader consumer protection laws might be used to address consumer harm resulting from the use of AI in prior authorization and claims review. Additionally, a growing number of states have updated longstanding state health insurance standards for managed care related to utilization review to clarify how these rules apply to AI (Figure 1). Almost all of the laws are focused on the decision-making process of utilization review, sometimes defined under state rules as individualized decisions about whether a given service is medically necessary based on the patient’s individual clinical circumstances. These laws do not necessarily include administrative claim review decisions that do not involve a medical necessity determination, such as whether a claim is for care that is excluded under the plan.

Each state law related to the use of AI in prior authorization and/or claim review has its own unique requirements, but major themes include:

- Human review of claim denials required. Some state laws include a provision that only a licensed health care provider may issue adverse determinations (a denial) and that AI cannot be used as the sole decisionmaker. For example, Illinois law requires that only a “clinical peer” make an adverse determination based on medical necessity and does not allow the sole use of an “algorithmic automated process” to make these decisions.

- AI tools must take individual clinical circumstances into account. A couple of these states require that any AI tool used for utilization review base its determination on an enrollee’s unique clinical history. Alabama, for instance, mandates that insurers that use artificial intelligence to make prior authorization determinations ensure that they base these decisions on an enrollee’s clinical history and clinical circumstances.

- Disclosure of AI use. A few of these states, such as Utah, require entities that use AI to conduct utilization review to disclose its use to the public, the state department of insurance, health care providers in their network, and each enrollee.

- Review of AI tool outcomes. Some state laws also require entities that perform utilization review to periodically review performance and outcomes of AI tools they use in order to check accuracy and reliability. California law requires that an AI tool be periodically assessed and revised to ensure maximum accuracy and reliability.

- Limits on the use of patient data to protect privacy. Several of these state laws include language that prohibits those conducting utilization review from using patient data beyond its intended purpose and contrary to HIPAA or state law confidentiality protections. Maryland law is one example.

- AI tools must be open to inspection, including the underlying algorithms. Some of these laws mandate that AI tools for utilization review be open to audit by regulators. In Texas, the commissioner is allowed to audit and inspect a utilization review agent’s use of an automated decision system for utilization review at any time.

- AI protections against bias and discrimination. A few state laws, such as Washington’s, require that AI tools be applied “fairly and equitably” and not result in discrimination, either directly or indirectly, against an enrollee.
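
As a rough illustration of how the human review and disclosure themes above might translate into a review workflow, the sketch below routes requests so that an AI tool can approve on its own but can never issue a denial by itself. This is a simplified assumption and does not implement any particular state's requirements; the class names, fields, and the `route` and `clinician_decide` functions are all hypothetical.

```python
from dataclasses import dataclass
from enum import Enum


class Outcome(Enum):
    APPROVED = "approved"
    DENIED = "denied"
    NEEDS_CLINICAL_REVIEW = "needs_clinical_review"


@dataclass
class Request:
    request_id: str
    ai_recommends_approval: bool  # hypothetical output of an AI tool


@dataclass
class Decision:
    request_id: str
    outcome: Outcome
    decided_by: str  # "ai_tool", "pending", or a clinician identifier
    ai_used: bool    # recorded so that AI use can be disclosed


def route(request: Request) -> Decision:
    """Let the AI tool approve, but send every would-be denial to a clinician."""
    if request.ai_recommends_approval:
        return Decision(request.request_id, Outcome.APPROVED, "ai_tool", True)
    return Decision(request.request_id, Outcome.NEEDS_CLINICAL_REVIEW, "pending", True)


def clinician_decide(pending: Decision, clinician_id: str, approve: bool) -> Decision:
    """Record the final decision made by a licensed reviewer on a pending case."""
    if pending.outcome is not Outcome.NEEDS_CLINICAL_REVIEW:
        raise ValueError("Only cases awaiting clinical review can be decided here.")
    outcome = Outcome.APPROVED if approve else Outcome.DENIED
    return Decision(pending.request_id, outcome, clinician_id, pending.ai_used)


# Usage: an AI approval is final; an AI non-approval waits for a clinician.
auto = route(Request("req-1", ai_recommends_approval=True))
pending = route(Request("req-2", ai_recommends_approval=False))
final = clinician_decide(pending, clinician_id="clinician-17", approve=False)
print(auto.outcome.value, pending.outcome.value, final.outcome.value, final.decided_by)
```

The design choice here is that a denial can only be produced by `clinician_decide`, so the sole-decisionmaker restriction is enforced by the structure of the code rather than by a flag that could be skipped. Other themes in the list, such as periodic accuracy review or regulator audits, would require logging and reporting beyond this sketch.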

New state guidance aims to exercise state authority to regulate AI use. Some states have issued guidance to make clear how existing state legal protections apply to AI. For example, in 2024, the Massachusetts Attorney General released a public Advisory explaining how the state’s existing consumer protection, civil rights, and data privacy laws apply to developers, suppliers, and users of AI, and how they could impact consumers in Massachusetts.

Insurance regulators in some other states have taken a similar approach, issuing new guidance to clarify how existing state law applies to AI and provide more specific information to insurers about their obligations concerning the use of AI. As of early April 2026, at least 25 states have issued guidance based on a model bulletin adopted in 2023 by the National Association of Insurance Commissioners (NAIC). The model bulletin applies to all types of state-regulated insurance (not just health insurance) and addresses the use of AI across all aspects of the insurance life cycle, including claims administration and payment, fraud detection, product development, and rating and pricing. It establishes the expectation that consumer-facing decisions made or supported by AI systems comply with existing insurance laws and regulations, including protections against unfair trade practices and illegal discrimination. It also instructs insurers to adopt policies and procedures with specifics about how AI is used and to implement controls to mitigate the risk of adverse outcomes. It specifies that insurance oversight includes the ability of regulators to inquire about the development, deployment, use, and outcomes of any AI system or predictive model used by insurers or their third-party vendors, as well as request information about system validation, testing, and ongoing audits of AI systems.

Issues To Watch

Striking a balance between advancing technological innovation that might save time and money and preventing harm to consumers is not a new challenge. It has been at the heart of consumer protection law for decades. AI is just the latest such challenge facing policymakers, regardless of party affiliation. For health insurance claims review specifically, calls for additional transparency and oversight of the process are longstanding and predate the use of AI. Future congressional action on AI will likely be shaped by the following issues:

The role of state-level consumer protections. Whether the federal government can preempt the application of state consumer protection laws in this area is an open question. The Trump administration’s AI Framework appears to acknowledge that certain state protections should continue to apply. A key issue in the development of any federal legislation will be deciding which state actions are consistent with states’ traditional role in overseeing health care and insurance and should be preserved, and which ones are best placed at the federal level for uniformity and consistency. Setting a clear framework for when state and federal protections can and cannot coexist will likely be part of the policy debate.

Some federal preemption provisions can create uncertainty and confusion for consumers. For example, whether state pharmacy benefit laws apply to self-insured employer plans under ERISA preemption is still being worked out through ongoing litigation. This leaves consumers in limbo about what protections they have. Given the rapid changes and risks associated with AI technologies, whether federal preemption is a workable approach for state AI laws is another open question.

Benefits and limitations of a national framework. A single federal standard that preempts most state protections could be easier to build consensus around and simpler for the public to understand, but the current deregulatory agenda for the federal government could mean lax oversight of fast-developing technology. A recently proposed interoperability regulation, for instance, would eliminate some federal certification standards for health IT developers, including those related to transparency of AI data sources and audit reporting.

On the other hand, federal agencies that have not played a role in AI and claims review in the past could increase oversight activities. For example, the Federal Trade Commission has some responsibilities for enforcing unfair and deceptive trade practices standards. Also, some have suggested that the Food and Drug Administration (FDA) should regulate the algorithms that health plans use to determine coverage in the same way the agency oversees AI used in medical devices through a premarket review and evaluation of these tools.

Evaluation of the impact of AI tools in prior authorization and claims review. Providers may use AI to enhance their billing and collection capabilities, and plans work in the opposite direction by using AI in claims review and audit to rein in spending, raising the question of how these tools impact costs to the health system overall. The challenge is to evaluate these tools in real time to determine whether the benefits of AI use in this area (and efforts to encourage its development under federal legislation) outweigh the risks. Access to information about the precise mechanisms of these tools is limited, and efforts to obtain information, for instance, about the AI tools involved in the CMS WISeR model, have resulted in litigation.

Assessment of risks to patients. The enthusiasm about AI technology that might assist consumers in navigating the complexity of insurance bills, claims, and appeals is sometimes tempered by concerns about the risks of incorrect information and claim denials, bias, and privacy and security. Privacy is a particular concern, given the limits of the federal HIPAA standards in reaching the technology companies involved in developing or implementing AI solutions. A KFF poll found that 77% of the public is concerned about the privacy of personal health information provided to AI tools.

Beyond the risk involved when a consumer enters their health information into an AI tool, improper access to this information to test or train AI is a concern. The Trump administration’s AI Framework urges Congress to provide resources to make “federal datasets” available to industry and academia. Concerns about the federal government accessing individually identifiable data from federal agencies to train AI models or for other purposes are growing, raising questions about what additional safeguards might be important in protecting consumers.