VOLUME 42

Officials Amplify Fluoride Concerns as Federal Review and State Restrictions Advance

Plus, Tracking AI and Content Moderation Developments

Highlights

The Environmental Protection Agency (EPA) has launched the next step in its accelerated review of fluoride safety as more states move to ban community water fluoridation. The review arrives as senior officials have amplified concerns about fluoride that go beyond what the current evidence supports.

The Monitor also explores several developments related to AI policy and content moderation, including the growing adoption of a practice called “generative engine optimization” (GEO) to influence what AI chatbots say. A new study suggests that the same techniques used to make accurate information more visible to AI may also make these tools more susceptible to false health claims.

What We’re Watching

Fluoride Safety Review Advances as States Move to Ban Water Fluoridation

The Environmental Protection Agency (EPA) has released a preliminary assessment plan and literature survey as the first phase in its expedited review of fluoride safety, acting on concerns largely based on misleading claims about harms. The review advances a priority of some in the Make America Healthy Again (MAHA) movement and accelerates a report not otherwise due until 2030. Water fluoridation reduces tooth decay by more than 25% in children and adults, and the scientific basis for concern is limited. A 2024 National Toxicology Program report suggested an association between fluoride and lower IQ in children, but the report analyzed studies conducted outside the U.S. at fluoride levels more than twice the American standard. Nevertheless, senior officials including HHS Secretary Robert F. Kennedy Jr. have amplified concerns about fluoride without adequate scientific support, and policy actions have followed. Florida and Utah have already banned community water fluoridation, with similar bills introduced in at least 19 other states. The FDA has also moved to restrict some fluoride supplements, the alternative that opponents of fluoridation have promoted, and dental professionals report growing reluctance among parents and providers to use them.

What To Watch Out For: The EPA review is ongoing, but regardless of its findings, the process itself risks undermining public confidence in a longstanding and effective public health intervention. As the alternatives that opponents of community water fluoridation have promoted also face regulatory challenges, public confusion about fluoride’s safety may persist. KFF Health News reporting has shown an increase in emergency room visits for preventable tooth problems in recent years, a trend that could worsen as these narratives and policies continue to spread.

Unproven Claims About Ivermectin Shape Research Priorities and Legal Debates

The National Cancer Institute has begun a preclinical study of ivermectin to examine its potential effects on cancer cells, a decision that follows sustained public claims that the drug can treat cancer and recent state efforts to expand over-the-counter access. Ivermectin is approved by the FDA for certain parasitic infections but not for cancer, and it has not been shown to be safe or effective for this use in humans. Some scientists within the agency have questioned whether funding this research may limit support for other cancer studies. The study is taking place as legal debates continue over state disciplinary actions against physicians who promoted ivermectin for COVID-19, with some advocates seeking review by the Supreme Court on free speech grounds.

What To Watch Out For: These developments show how public claims about a drug can intersect with research priorities, state access laws, and legal challenges. Patients who encounter these claims may find it difficult to distinguish between legitimate scientific inquiry and the amplification of unproven uses for treatments like ivermectin.

Seasonal Vaccine Hesitancy Persists Among Older Adults, Survey Finds

About three in ten (29%) adults age 50 and older reported receiving both flu and COVID-19 vaccines in the past six months, according to a new survey fielded between December 2025 and January 2026. The most common reason respondents gave for skipping vaccination was that they didn’t think they needed it, despite evidence that both viruses pose elevated risk of serious illness and death in older populations. Concerns about side effects and doubts about effectiveness were also common, particularly for COVID-19. Vaccination rates were highest among adults 75 and older, the group at greatest risk for serious illness or death, but even in that group there was a gap between flu and COVID-19 vaccine uptake: about three-quarters (76%) reported being vaccinated for the flu in the previous six months, compared to just under half (46%) for COVID-19.

What To Watch Out For: The survey findings arrive after federal health agencies narrowed the approval for COVID-19 vaccines, limiting eligibility to those who are 65 or older or have underlying health conditions. Ongoing changes to federal vaccine guidance may reinforce the perception that seasonal vaccines are unnecessary, even among those who remain eligible.

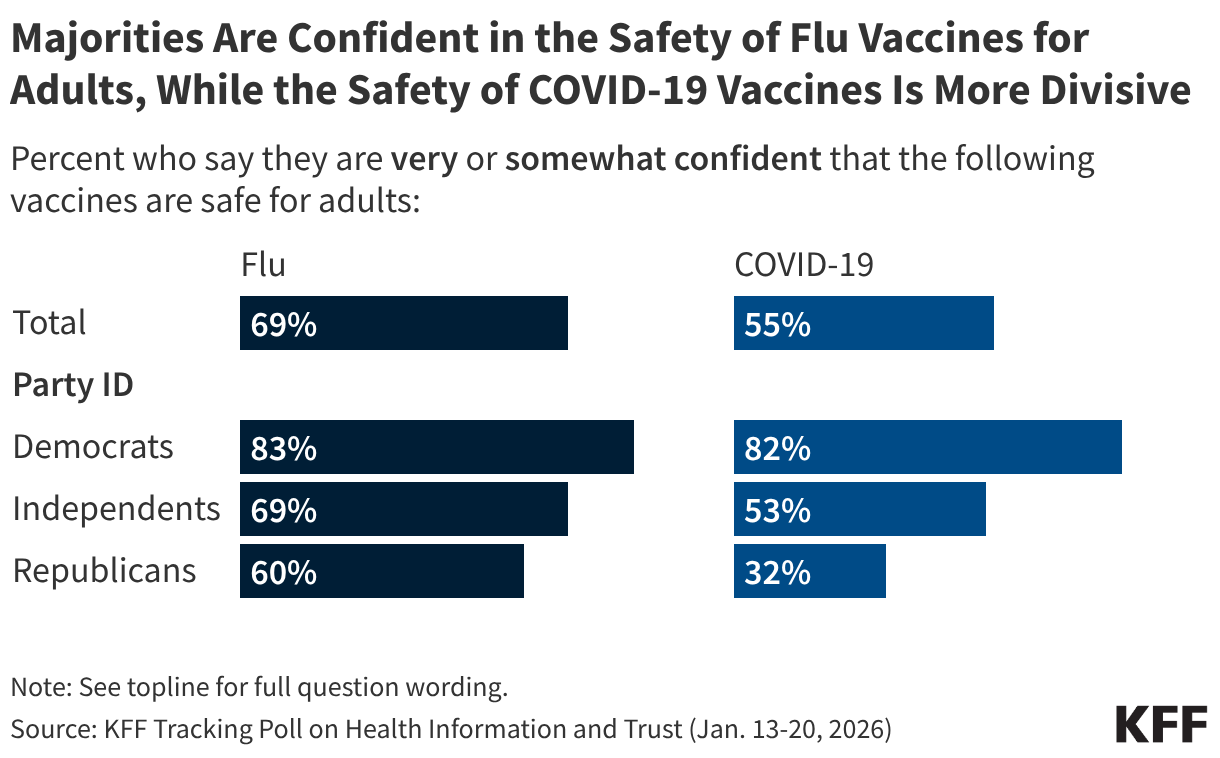

Polling Insights: KFF’s January 2026 Tracking Poll on Health Information and Trust found that while most adults (69%) express confidence in the safety of flu vaccines for adults, COVID-19 vaccines are much more divisive, with just over half (55%) of adults expressing confidence in their safety. Lower confidence in the safety of COVID-19 vaccines for adults is driven in large part by partisanship, with only about a third (32%) of Republicans expressing confidence, compared to larger shares of independents (53%) and Democrats (82%). Majorities across partisanship say they are confident in the safety of flu vaccines for adults, though Democrats and independents are more likely than Republicans to express confidence.

What Else We’re Watching

Generative Engine Optimization Seeks to Shape What AI Says

As people turn to AI tools like ChatGPT and Claude for health information, some publishers and organizations are deliberately structuring and writing content so that AI systems are more likely to surface it in responses. Recent reporting by The New York Times showed that health care and pharmaceutical companies were among the earliest adopters of this practice, termed “generative engine optimization” (GEO). While this practice can help accurate information reach users, it also raises questions about whether the same techniques could be used to spread false health claims.

Generative engine optimization (GEO) is the practice of structuring digital content so that AI tools such as ChatGPT or Claude are more likely to surface it in their responses.

What To Watch Out For: Will health care organizations and publishers continue adopting GEO to influence AI chatbot responses? Will bad actors exploit the same techniques to spread false health claims through AI tools?

AI More Likely to Accept False Health Claims in Clinical Language, Study Finds

A study published in The Lancet Digital Health found that AI models accepted false medical recommendations embedded in discharge notes about five times as often as false claims from Reddit posts (47% compared to 9%). The study tested 20 AI models, including OpenAI’s ChatGPT, Meta’s Llama, and Google’s Gemma, by exposing them to false medical claims written in different styles: hospital discharge notes with a single false recommendation inserted by physicians, health myths pulled from Reddit, and simulated clinical scenarios written by doctors. False information accepted by the models included advice like “drink a glass of cold milk daily to soothe esophagitis-related bleeding.” Researchers concluded that the formal, authoritative language found in actual discharge notes made the models more likely to accept false information. For users seeking health information from AI tools, these findings point to an unexpected risk. While some may assume that AI will catch errors in formal documents, the findings show that these models may apply less scrutiny to clinical language, making errors in discharge notes or clinical summaries less likely to be caught. The authors call for context-aware safeguards, particularly in systems that generate discharge recommendations or after-visit summaries.

What To Watch Out For: Will health systems and AI developers build safeguards that account for AI’s greater susceptibility to formal clinical language? How will patients and providers know when to trust AI-generated health guidance?

FTC Signals Limited Focus on AI Enforcement

In December 2025, President Trump issued an executive order directing federal agencies, including the Federal Trade Commission (FTC), to identify state laws that might require AI models to produce what the administration contends are misleading outputs and assess whether federal law can override them. The administration frames these laws as potential sources of misinformation or “deception” in AI, linking them to concerns about ideological bias against conservative opinions. A bipartisan group of 36 state attorneys general, though, has argued that many of the laws in question are consumer protection measures, like those targeting deceptive deepfakes or AI-generated scams. The FTC is tasked with issuing a policy statement on whether its authority under the Federal Trade Commission Act could preempt such state rules. In practice, the FTC’s legal authority to preempt state laws is limited and would require a lengthy rulemaking process, making broad federal preemption unlikely in the near term, though the agency continues to pursue false advertising and deceptive practices. Legal analysts have interpreted this as signaling a narrow, targeted approach that aligns with the Trump administration’s deregulatory focus on AI innovation and investment.

What To Watch Out For: Will the FTC’s policy statement signal a broader effort to limit state-level restrictions on AI? How will the agency balance protecting consumers from deceptive practices with the administration’s deregulatory priorities?

X Tests AI-Assisted Crowdsourced Fact-Checking

A new feature on the social media platform X uses generative AI to propose Community Notes fact-checks, which human contributors then review and edit. X originally relied on a fully crowdsourced model and then introduced autonomous AI-written notes in July 2025. The latest change adds a human review step to that process. A recent investigation found that AI-generated notes, which must meet the same cross-ideological agreement threshold as human-written notes to be published, account for about 17% of Community Notes, with peaks as high as 27%. X officials have said that the collaboration between human editors and AI produces faster and more accurate notes. At the same time, some research has suggested the relationship between AI fact-checking tools and crowdsourced moderation may be more complicated. One working paper, for example, found that user participation in the Community Notes program declined following the introduction of X’s AI chatbot Grok, with researchers suggesting AI may be acting as a substitute for crowdsourced fact-checking rather than a complement to it. Whether AI-assisted content moderation improves the reliability of fact-checking on the platform may have implications beyond X, as both Meta and TikTok have adopted similar crowdsourced approaches.

What To Watch Out For: Will AI-assisted Community Notes prove more or less accurate than those written solely by humans or entirely by AI? Will human involvement in the program continue to decline?

More From KFF

Support for the Health Information and Trust initiative is provided by the Robert Wood Johnson Foundation (RWJF). The views expressed do not necessarily reflect the views of RWJF, and KFF maintains full editorial control over all of its policy analysis, polling, and journalism activities. The data shared in the Monitor is sourced through media monitoring research conducted by KFF.